Shazam Style Automatic Signal Identification via the Sigidwiki Database

Thank you to José Carlos Rueda for submitting news about his work on converting a "Shazam"-like Python program made originally for song identification into a program that can be used to automatically identify radio signals based on their demodulated audio sounds. Shazam is a popular app for smartphones that can pull up the name of any song playing within seconds via the microphone. It works by using audio fingerprinting algorithms and a database of stored song fingerprints.

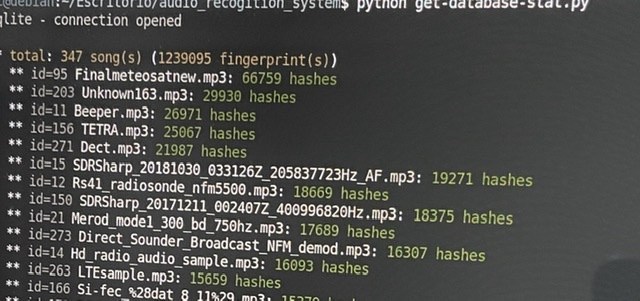

Using a similar algorithm to Shazam, programmer Joseph Balikuddembe created an open source program called "audio_recogition_system" [sic], which was designed for creating your own audio fingerprint databases out of any mp3 files.

José then had the clever idea to take the signal sounds from sigidwiki.com and build an identification database from them for audio_recogition_system. He writes that with his database the program can now identify up to 350 known signals from the sigidwiki database. His page contains the installation instructions and a link to download his premade database. The software can identify signals from audio that is input via the PC microphone, a virtual audio cable, or a file.
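To make the fingerprint-and-lookup idea more concrete, here is a minimal sketch in Python. It is not the code from audio_recogition_system, and it is only an assumption about the general approach: take the strongest frequency in each short frame, hash pairs of nearby peaks, store the hashes per signal, and let matching hashes vote. All function names, the frame sizes, and the two synthetic "signals" (`two_tone_a`, `two_tone_b`) are hypothetical stand-ins, not real sigidwiki samples. It uses a slow, dependency-free DFT so that it runs with the standard library only.

```python
import cmath
import hashlib
import math
from collections import Counter


def spectrogram(samples, frame=64, hop=32):
    """Magnitude spectrogram via a naive DFT (slow, but needs no extra libraries)."""
    spec = []
    for start in range(0, len(samples) - frame + 1, hop):
        chunk = samples[start:start + frame]
        mags = []
        for k in range(frame // 2):
            acc = sum(x * cmath.exp(-2j * math.pi * k * n / frame)
                      for n, x in enumerate(chunk))
            mags.append(abs(acc))
        spec.append(mags)
    return spec


def peaks(spec):
    """Keep only the strongest frequency bin of each frame as (frame_index, bin)."""
    return [(t, max(range(len(mags)), key=mags.__getitem__))
            for t, mags in enumerate(spec)]


def fingerprints(samples, fan_out=3):
    """Hash pairs of nearby peaks (bin1, bin2, time gap); keep the anchor time."""
    pk = peaks(spectrogram(samples))
    out = []
    for i, (t1, f1) in enumerate(pk):
        for t2, f2 in pk[i + 1:i + 1 + fan_out]:
            key = f"{f1}|{f2}|{t2 - t1}".encode()
            out.append((hashlib.sha1(key).hexdigest()[:16], t1))
    return out


def build_db(named_signals):
    """Map each hash to the (signal name, frame offset) pairs where it occurs."""
    db = {}
    for name, samples in named_signals.items():
        for h, t in fingerprints(samples):
            db.setdefault(h, []).append((name, t))
    return db


def identify(db, samples):
    """Vote per (name, offset difference); return the best name, or None."""
    votes = Counter()
    for h, t in fingerprints(samples):
        for name, t_db in db.get(h, []):
            votes[(name, t_db - t)] += 1
    if not votes:
        return None
    (name, _offset), _count = votes.most_common(1)[0]
    return name


def tones(freqs, rate=8000, seg=256):
    """Synthetic test audio: one sine segment per frequency (hypothetical data)."""
    out = []
    for f in freqs:
        out.extend(math.sin(2 * math.pi * f * n / rate) for n in range(seg))
    return out


if __name__ == "__main__":
    db = build_db({
        "two_tone_a": tones([1000, 2000] * 4),
        "two_tone_b": tones([500, 3000] * 4),
    })
    clip = tones([1000, 2000] * 4)[512:1536]  # excerpt of the first signal
    print(identify(db, clip))
```

A real system would keep many peaks per frame and cope with noise and frequency offsets, which matters a lot for off-air radio audio; this sketch only shows why a short excerpt can be matched against a stored database.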

Is it possible to run the script on Windows? I have tried, but I have no idea how to make it work.

Did anyone get the file input working? The Python code doesn’t look like it’s complete.

Tested using microphone with TETRA and working

Hello,

Good job, works fine.

Is it possible to have an Android/mic client working over LAN/WAN?

This sport is not only at home:)

Thank you for posting

Tested and working. Had some problems using “line” instead of “mic”, but it worked in the end. A really good idea.

This function should be added to the Artemis 3 Signal Identification Software.

Yes, this could be an excellent idea! We already discussed it in the past: in Artemis Mk.2 it was a nightmare because of the entirely different programming languages (Python and VB). With Artemis Mk.3 the story is completely different. Unfortunately, we discussed this feature during the launch of Artemis 3, when we were literally swamped with bug fixing and website problems. Nowadays the situation is far more stable, so we can revive the idea. Returning to what you proposed: it is also worth mentioning a work from Randaller (https://github.com/randaller/cnn-rtlsdr) that takes advantage of deep learning to distinguish among different signals. This is a nice alternative but, thanks to José, we know that the code of Baliksjosay, which uses hashes generated from the FFT (https://github.com/baliksjosay/audio_recogition_system), works with radio signals. A fair question now is how reliable it actually is (but this is probably directly related to the quality of the database). Anyway: stay tuned! There is a high chance of good news from us.