Automatic Signal Recognition with AI Machine Learning and RTL-SDR

Thank you to Trevor Unland for submitting his machine learning project called "RTL-ML", which automatically recognizes and classifies eight different signal types on low-power ARM single-board computers paired with an RTL-SDR.

Trevor's blog post explains the machine learning architecture in detail, the accuracy he obtained, and how to try it yourself. You can either run the pre-trained model or, if you have sufficient training data, train your own.

The code is entirely open source on GitHub, and the training set data has been shared on HuggingFace.

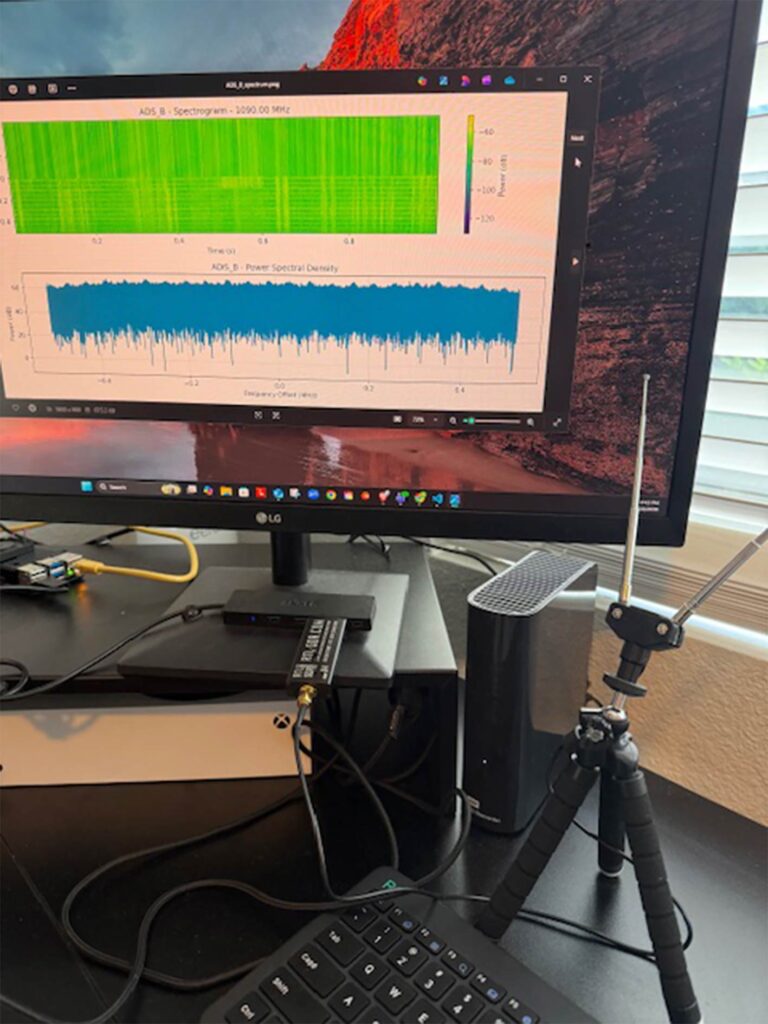

RTL-ML is an open-source Python toolkit for automatic radio signal classification using machine learning. It runs on ARM single-board computers like the Raspberry Pi 5 or Indiedroid Nova paired with an RTL-SDR Blog V4, achieving 87.5% accuracy across 8 real-world signal types including ADS-B aircraft transponders, NOAA weather satellites, ISM sensors, FM broadcast, NOAA weather radio, pagers, and APRS.

The project provides a complete pipeline from signal capture to trained classifier. Unlike academic approaches that rely on synthetic data or expensive GPU hardware, RTL-ML uses real signals captured from actual antennas and runs entirely on edge hardware with no cloud dependency. The Random Forest model is 186 KB and processes signals in around 120 ms on a Pi 5.
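To give a rough feel for what a capture-to-classifier pipeline of this kind involves, here is a minimal sketch using NumPy and scikit-learn's `RandomForestClassifier`. It is not RTL-ML's actual code: the `synth_capture` helper, the two toy classes ("tone" and "burst"), and the four hand-picked features are all assumptions made for illustration, and the IQ samples are synthesized only so the example runs without an SDR attached. RTL-ML itself trains on real captured signals.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier

RNG = np.random.default_rng(0)
N_SAMPLES = 4096  # IQ samples per capture (assumed value, not from RTL-ML)


def synth_capture(kind):
    """Hypothetical stand-in for a real RTL-SDR capture; returns complex IQ."""
    noise = 0.1 * (RNG.normal(size=N_SAMPLES) + 1j * RNG.normal(size=N_SAMPLES))
    t = np.arange(N_SAMPLES)
    carrier = np.exp(2j * np.pi * 0.1 * t)
    if kind == "tone":
        return carrier + noise          # continuous carrier
    gate = (t // 256) % 2               # on-off keyed carrier for "burst"
    return gate * carrier + noise


def features(iq):
    """Reduce one capture to a small feature vector (illustrative choice)."""
    spectrum = np.abs(np.fft.fftshift(np.fft.fft(iq))) ** 2
    p = spectrum / spectrum.sum()       # normalized power spectrum
    freqs = np.linspace(-0.5, 0.5, p.size, endpoint=False)
    centroid = (freqs * p).sum()
    bandwidth = np.sqrt(((freqs - centroid) ** 2 * p).sum())
    flatness = np.exp(np.mean(np.log(p + 1e-12))) / p.mean()
    envelope = np.abs(iq)
    amp_variation = envelope.std() / envelope.mean()
    return np.array([centroid, bandwidth, flatness, amp_variation])


# Build a small labeled dataset: 40 captures per class.
labels = ["tone", "burst"]
X = np.array([features(synth_capture(k)) for k in labels for _ in range(40)])
y = np.array([k for k in labels for _ in range(40)])

# Shuffle, then hold out 20 captures for evaluation.
idx = RNG.permutation(len(y))
train, test = idx[:60], idx[60:]

clf = RandomForestClassifier(n_estimators=50, random_state=0)
clf.fit(X[train], y[train])
accuracy = clf.score(X[test], y[test])
print(f"held-out accuracy: {accuracy:.2f}")
```

A Random Forest over a handful of features like these stays small and cheap to evaluate, which fits the edge-hardware focus described above. Real signal types are far harder to separate than these two toy classes, so the feature set and the resulting accuracy here say nothing about RTL-ML's own results.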

The GitHub repository includes the full capture and training scripts, a pre-trained model, 8 validated spectrograms, and documentation for adding new signal types. It works out of the box on both Raspberry Pi 5 and Indiedroid Nova with identical code and accuracy.

You might also be interested in some similar projects we've posted about in the past, such as this Shazam-style signal classifier, which used audio data from sigidwiki.com, and an Android app doing the same thing (which unfortunately now appears to have been removed from Google Play). There is also this deep learning-based signal classifier model.