A Review of the SDRplay RSP1A

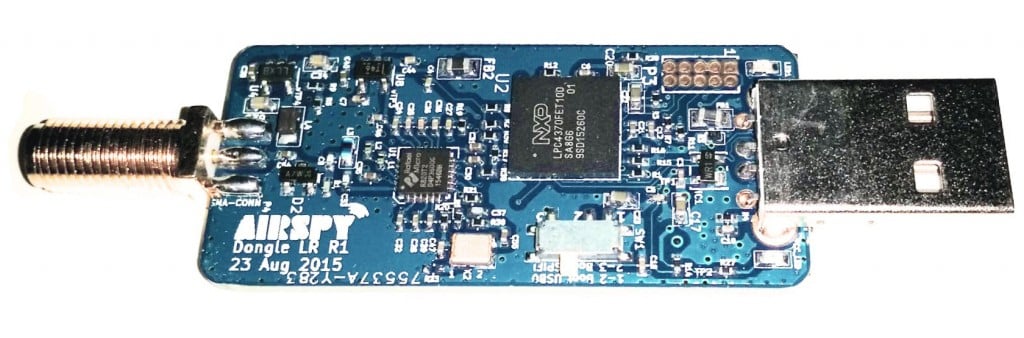

Yesterday the SDRplay team released the $99 US RSP1A, which is a revision of the RSP1. In this post we present a review comparing its performance against the older RSP1 and the currently selling $169.95 US RSP2. We mainly aim to demonstrate the improvements that we've found on the RSP1A in areas where we discovered problems on the RSP1 or RSP2.

Discussion of Improvements

First we present a discussion on the improvements made.

TCXO: The first noticeable improvement is that the RSP1A now comes with a 0.5 PPM TCXO. The lack of one was a main criticism of the RSP1, which only came with a standard oscillator that can drift as the temperature changes. But as mentioned in our previous review that included the RSP1, the drift was fairly small after warmup due to the good heat dissipation of the large PCB, and the relatively low power usage, and thus lower heating, of the Mirics chips used on RSP units. Nevertheless, a TCXO is a good upgrade and brings the RSP1A back in line with most low cost SDRs on the market now.
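To put the 0.5 PPM figure in perspective, the worst-case tuning error scales linearly with the tuned frequency. The short Python sketch below illustrates this. The function name `drift_hz` and the example frequencies are our own illustrative choices, not values taken from SDRplay.

```python
def drift_hz(tuned_hz: float, ppm: float) -> float:
    """Worst-case frequency error in Hz for an oscillator tolerance given in PPM."""
    return tuned_hz * ppm / 1e6


# Example frequencies are hypothetical, chosen only to show the scaling.
for label, freq_hz in [("100 MHz", 100e6), ("433 MHz", 433e6), ("1.5 GHz", 1.5e9)]:
    print(f"{label}: up to {drift_hz(freq_hz, 0.5):.1f} Hz off at 0.5 PPM")
```

At 100 MHz this works out to at most 50 Hz, which is small compared to the bandwidth of most voice and broadcast signals.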

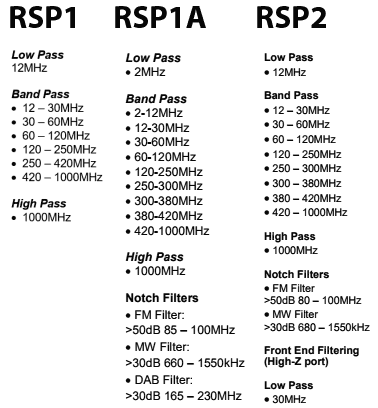

Enhanced RF Preselectors + Notches: Strong out of band signals can overload an SDR, causing problems like imaging and reduced sensitivity. Preselectors are RF filters which help to filter out unwanted signals that lie outside the band that you are listening to.

The RSP1 had 8 preselector bands and the RSP1A brings this number up to 12, which is even more than the 10 preselectors on the RSP2.

In testing we found that the new preselectors certainly do help out a lot. The new 2 MHz low pass and 2 - 12 MHz filters help to reduce interference from the MW broadcast AM band. Changes in the VHF filters reduce problems from strong broadcast FM and DAB stations. The existing filters have also been sharpened considerably, making them even more effective. The RSP1 in some cases suffered quite severely from out of band signal interference, and the RSP2 made it a bit better, but the RSP1A reduces the interference problem much further.

The new FM/AM and DAB notch filters also do a good job at notching out these often problematic very strong signals.

Improved LNA Architecture: In the RSP1 the front end LNA could only be turned on or off. Turning it on reduces the noise figure and improves performance, especially at UHF frequencies. The single gain step was problematic as often the LNA could overload on strong signals if turned on. The RSP1A introduces a gain control block which allows the LNA to have variable gain steps.

This new architecture helps to maximise the dynamic range of the RSP1A, thus reducing overloading.

Extended frequency coverage down to 1 kHz: The lower limit of the RSP1 was 10 kHz, so reception of very low frequencies below 10 kHz is now available on the RSP1A.

Bias-T: Just like with the RSP2, the bias-t allows you to power external devices such as remote LNAs and switches over the coax cable. Running a good LNA next to the antenna is optimal, as this helps push signals through the coax cable losses.

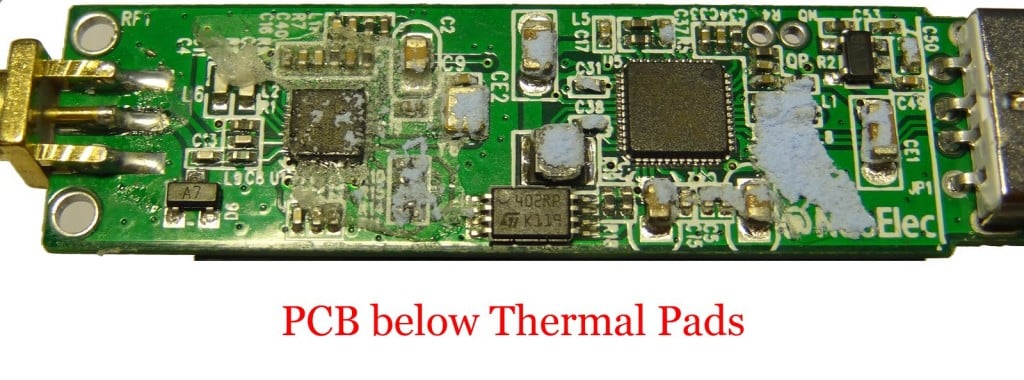

RF Shielding: Like the RSP2, the plastic case is now spray painted with metallic paint on the inside. This works almost as well as a full metal case at shielding against unwanted signals entering directly through the PCB instead of through the antenna. We do still notice some leakage making its way in through the coax shield, but it is relatively minor with the shielding.

ADC Resolution Increased to 14-bits: The RSP1A uses the same ADC chip as the RSP1, but now has unlocked 14-bit ADC capability for bandwidths below 6 MHz thanks to onboard decimation and oversampling. So now 14-bit data comes directly into the PC if using a bandwidth below 6 MHz. Further decimation can still be achieved within software like SDRuno.

A higher bit ADC can improve dynamic range, meaning that strong signals are less likely to overload the SDR.
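As a rough illustration of how oversampling and decimation can trade bandwidth for resolution, the sketch below uses the textbook model for plain oversampling, where quantisation noise is assumed to be white and each 4x increase in oversampling ratio yields one extra bit. This is an assumption made for illustration only: it is not a description of the internals of the RSP1A's ADC, and converters that use noise shaping gain resolution faster than this model predicts. The function names are our own.

```python
import math


def extra_bits_from_oversampling(ratio: float) -> float:
    """Extra bits of resolution from oversampling by `ratio` and decimating,
    assuming white quantisation noise and no noise shaping."""
    return 0.5 * math.log2(ratio)


def required_oversampling(extra_bits: float) -> float:
    """Oversampling ratio needed for `extra_bits` under the same assumption."""
    return 4.0 ** extra_bits


# Going from 12 to 14 bits means two extra bits, roughly 12 dB at ~6.02 dB per bit.
ratio = required_oversampling(2)
print(f"ratio needed for 2 extra bits: {ratio:.0f}x")
print(f"equivalent gain: {6.02 * extra_bits_from_oversampling(ratio):.2f} dB")
```

Under this simple model, two extra bits need a 16x oversampling ratio, which is why the higher resolution is only available at the lower output sample rates.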

We asked SDRplay how 14-bits was achieved with the same chips used by the RSP1 and they explained that it is through oversampling and decimation onboard the chip. They also wrote the following technical reply which is a very good read (collapsed as the reply is quite long, click on "Read the Reply" to expand):

[expand title = "READ THE REPLY"]

The ADCs on the MSi2500 use a sigma-delta topology where a highly oversampled multi-bit ADC uses decimation filtering to provide the desired resolution. As the original spec for the MSi2500 called for 12 bit resolution, the fact that the converter was capable of delivering 14 bits for final sample rates of less than 6.048 MHz was ignored. Working with the Mirics team, we have been able to unlock the extra two bits of resolution that the MSi2500 was always capable of delivering. Using sample rates above 6.048 MHz, the ADC defaults back to 12 bit resolution.

They also explained:

If we take an 8 bit ADC for example, we can expect around 48 dB of instantaneous dynamic range. This will most likely be far lower than that achievable from the RF front end whose dynamic range will be influenced by factors such as noise figure, intermodulation, cross modulation and synthesizer phase noise (reciprocal mixing). A decent tuner front end should be capable of delivering 65-70 dB of instantaneous dynamic range, which is also roughly what you can expect from a 12 bit ADC. In other words, we believe that in the RSP1, the instantaneous dynamic range of the tuner and ADCs were approximately the same. The limitation that the RSP1 had was that, because of the single gain step in the LNA, it was not always possible to utilise the available dynamic range in the most effective way. The RSP1A gives much greater (and finer) control over the RF gain and this allows for better alignment of the signal level into the tuner to better exploit the available dynamic range. In our tests in the broadcast FM band, we believe that the RSP1A gives around 10 dB more ‘usable’ dynamic range than the RSP1. In other words, if we combine multiple controlled modulated signals (from RF signal generators), with real weak off-air signals, the RSP1A is capable of handling interferers that are around 10 dB greater than the RSP1. Benchmarking against other products, in our tests, the RSP1A seems to give better performance now than anything else in the same price range, both in terms of sensitivity and in terms of in-band overload performance.

The RSP1 always gave very good sensitivity but in optimising it in this way, we gave up some performance in terms of in-band overload performance. Our objective with the RSP1A was to address this without sacrificing sensitivity.

Now, going back to the issue of 14 bits vs 12 bits and instantaneous dynamic range. If we increase the ADC dynamic range from 12 to 14 bits, then the ADC dynamic range should no longer influence the performance of the receiver. Indeed, it is our view, that for any receiver that needs to use a tuner as part of the front end (and any receiver that operates across the frequency range of the RSP will have to use a tuner for the foreseeable future), there is little benefit to be gained with ADC resolutions in excess of 14 bits, as to utilise the extra dynamic range that a higher resolution ADC can give, a much higher performance tuner would be required. Tuner technology has come a very long way in the last 10-15 years and the performance of modern integrated devices is actually very good. To get 12 dB of better dynamic range from a tuner is extremely difficult and can really only be achieved by using very much greater levels of power and esoteric semiconductor technologies. One possible area where you might see better performance is where you have multiple strong interfering signals to the extent that the RF gain needs to be turned down to such a level that the ADC quantisation noise effectively limits the noise floor of the receiver. In this case, you ought to see improved performance in 14 bit mode when compared to 12 bit mode, but please note that the improvement may only be a few dBs in the weak signal reception. If the noise floor of the receiver is still limited by the external LNA, then improved ADC dynamic range will give no perceptible improvement whatsoever.

A direct sampling receiver that does not use a tuner should in principle allow greater dynamic range than one that does, but in practice any direct sampling ADC needs some form of external low noise amplification to ensure a reasonable noise figure and the dynamic range (noise, intermodulation performance etc) of this external amplification block becomes a limiting factor. This is certainly true at VHF and above. At HF, as you will be well aware, the receiver noise figure is not really very important because the atmospheric noise floor is so high. In principle, you might therefore think that our best approach would be to bypass the tuner and use the decimated 16 bit performance of our ADCs. This would still give an effective receiver bandwidth of 375 kHz with 16 bit performance. The reality though is that the real dynamic range of signals coming into the antenna is limited by propagation conditions and atmospheric noise. It is rare to find signals that are above the atmospheric noise floor that vary by more than 60 dB. In practical terms, we believe that equivalent performance can be achieved, simply by the addition of RF pre-selection and AM-band notch filters and in this way we avoid some of the other compromises of direct sampling systems.

So, in a nutshell, when transitioning from 12 bit mode to 14 bit mode, don’t expect to see 12 dB more dynamic range. In the real world, it doesn’t work this way. This is why 12 bit devices can compare quite favourably with higher end 16 bit SDRs such as the Elad FDM-S2, particularly when you consider the difference in cost. We fully expect the Elad to be better, but the difference will not be 24 dB or anything close to it.

Without wishing to labour the point about myths and misunderstandings, it is worth adding a bit of clarification regarding the term ‘dynamic range’. This is a much misunderstood term which can mean very different things depending upon the circumstances and type of signal being received. There is also a difference between ‘dynamic range’ and ‘instantaneous dynamic range’. If you ask 10 different radio engineers what they mean by the term dynamic range, you are sure to get more than one different answer! Another important point to note is that ADC dynamic range is NOT the same as receiver dynamic range. When referring to ADCs, the term dynamic range generally refers to the Spurious Free Dynamic Range (SFDR). This is measured using a CW tone and refers to the ratio between the maximum RMS signal that the ADC can handle and the largest spur or level of quantisation noise within the ADC bandwidth. This is a measure of both noise and linearity of an ADC. As a case in point, it is worth noting that a 16 bit ADC may not necessarily have a higher SFDR than a 12 bit ADC despite having a greater resolution. The greater resolution will generally result in a lower level of quantisation noise, but not necessarily a lower level of harmonic distortion and spurs. In a multi-channel/multi-signal SDR system a lower level of quantisation noise is generally helpful, even if the SFDR is not better, but is not guaranteed to give better performance if the weak signal of interest happens to fall on top of an ADC spur. Where a single signal occupies the entire ADC bandwidth, it is ONLY the SFDR that matters and not the resolution or quantisation noise. Sometimes you will hear people refer to the Effective Number Of Bits (ENOB). ENOB is related to the SFDR in that it is a measure of the maximum SINAD that can be attained with an ADC at a given sample rate and so is also a measure of both linearity and noise performance. The relationship is ENOB = (SINAD - 1.76)/6.02, with SINAD in dB.

In the ADC subsystem used in the RSP, whilst the ADCs are 12 bit at 8 MHz sampling the ENOB is 10.4 (for both I and Q). At lower sample rates, the ENOB improves and gets closer to the idealised performance of the converter.

In a receiver system as a whole, the term dynamic range will generally be interpreted to mean the difference (in dB) between the minimum discernible signal and the maximum level of signal that can be handled. But this is different from the term instantaneous dynamic range, which generally refers to the difference between the minimum discernible signal in the presence of the largest signal that can be handled at the same time. What this ‘number’ is in each case will depend upon the type of signal. So for example, a receiver with a given noise figure and linearity performance will have a different instantaneous dynamic range when receiving an 8 MHz wide 256-QAM CATV signal than when receiving an FM signal that is a few kHz wide. This is simply because the SINR (Signal to Interference + Noise Ratio) requirement for a given BER for a 256-QAM signal is very different from that required for an FM signal and also the peak to average ratio of the two signals is very different.

[/expand]
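The ENOB relation quoted in the reply is easy to play with numerically. The sketch below is our own illustration, not SDRplay's code, and the function names are hypothetical. It converts between SINAD and ENOB using the formula from the reply, and compares the quoted ENOB of 10.4 at 8 MHz sampling against the idealised figure for a 12 bit converter.

```python
def enob_from_sinad(sinad_db: float) -> float:
    """Effective number of bits for a measured SINAD in dB."""
    return (sinad_db - 1.76) / 6.02


def sinad_from_enob(enob: float) -> float:
    """SINAD in dB implied by a given effective number of bits."""
    return 6.02 * enob + 1.76


quoted_enob = 10.4  # figure given in the reply for 8 MHz sampling
ideal_12_bit = sinad_from_enob(12)  # idealised converter: ENOB equals resolution
actual = sinad_from_enob(quoted_enob)

print(f"ideal 12 bit SINAD: {ideal_12_bit:.1f} dB")
print(f"SINAD at ENOB {quoted_enob}: {actual:.1f} dB")
print(f"shortfall: {ideal_12_bit - actual:.1f} dB")
```

The gap of roughly 10 dB between the idealised and effective figures shows why nominal bit counts alone are a poor guide to real dynamic range, which is the point the reply makes.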

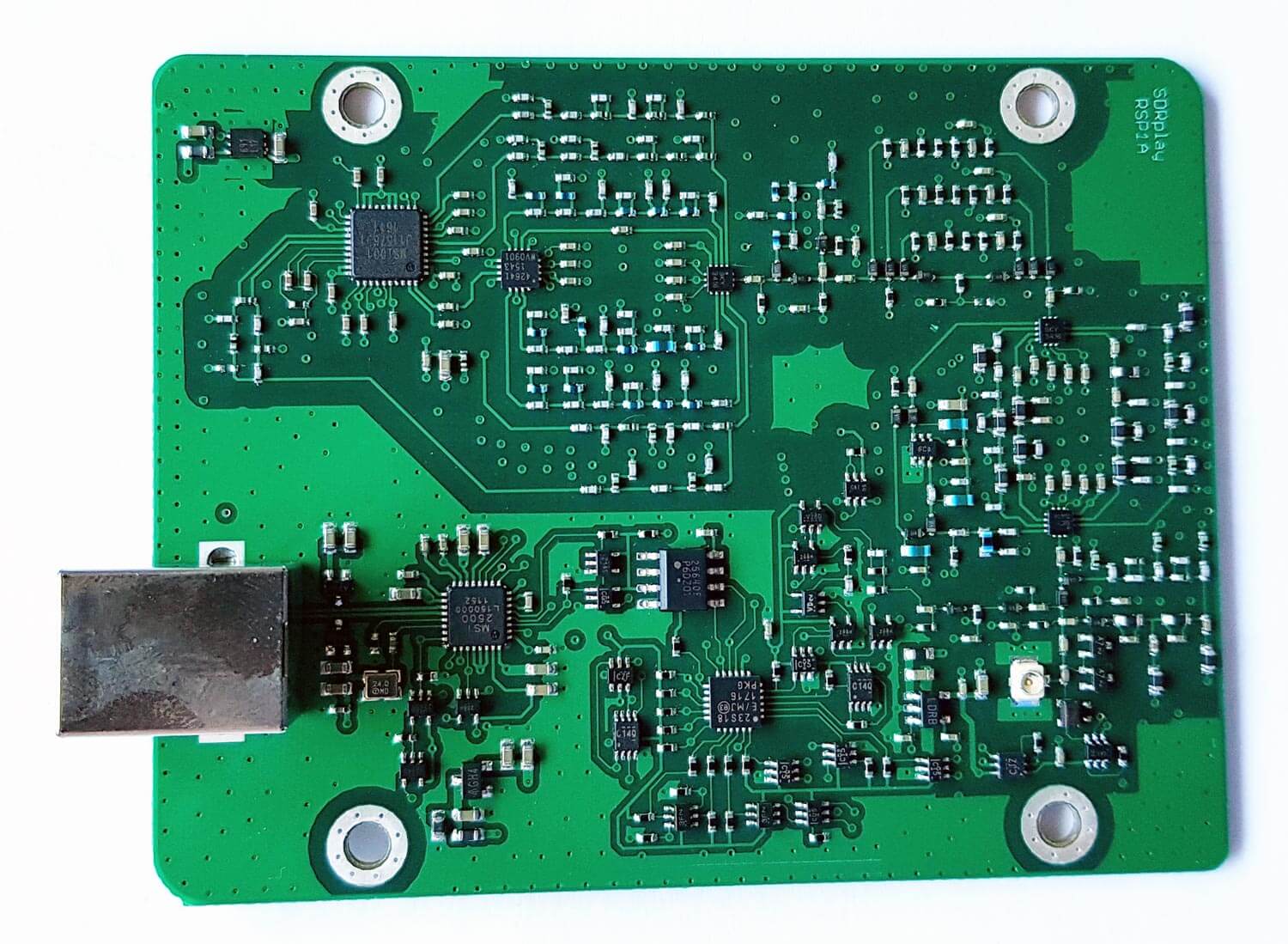

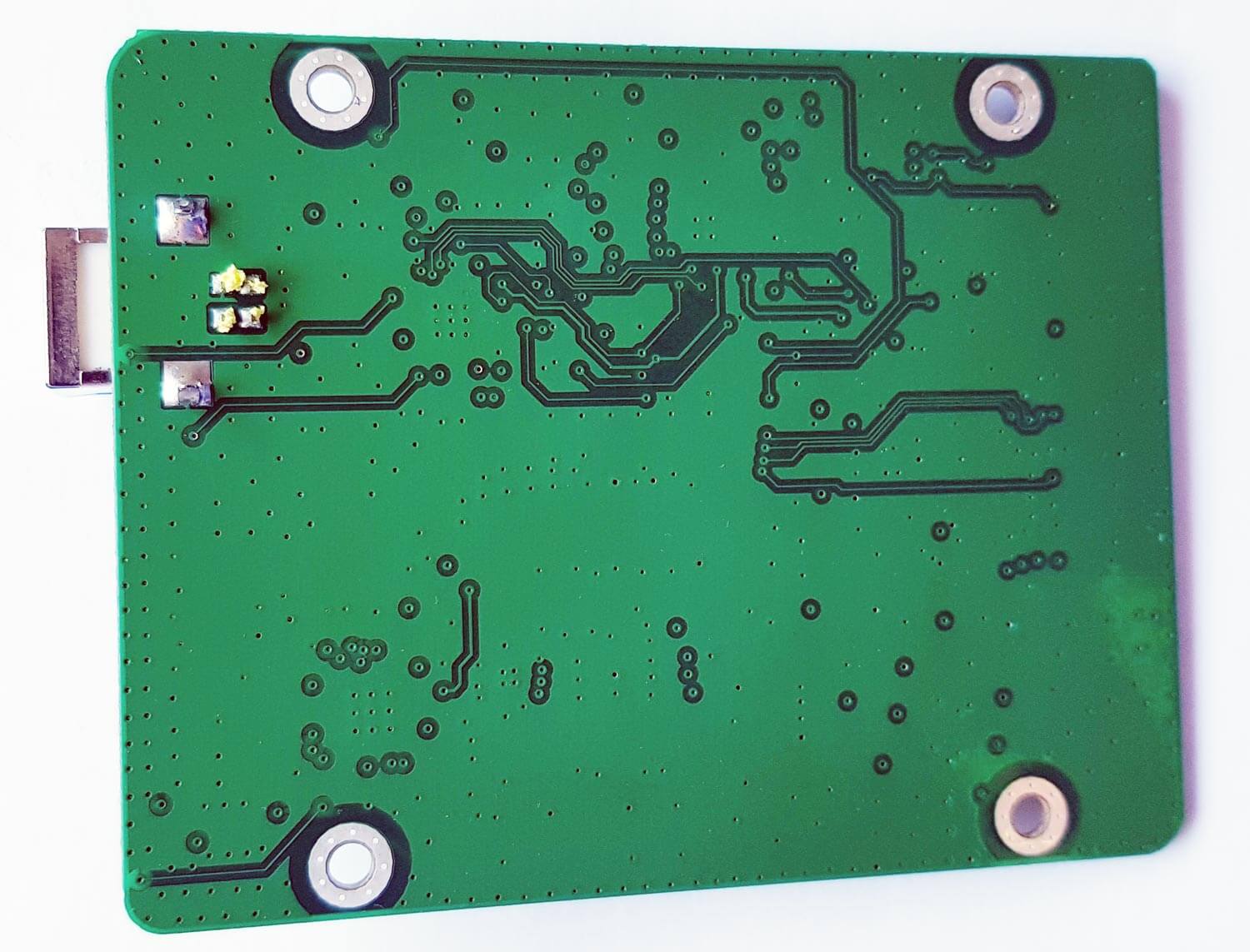

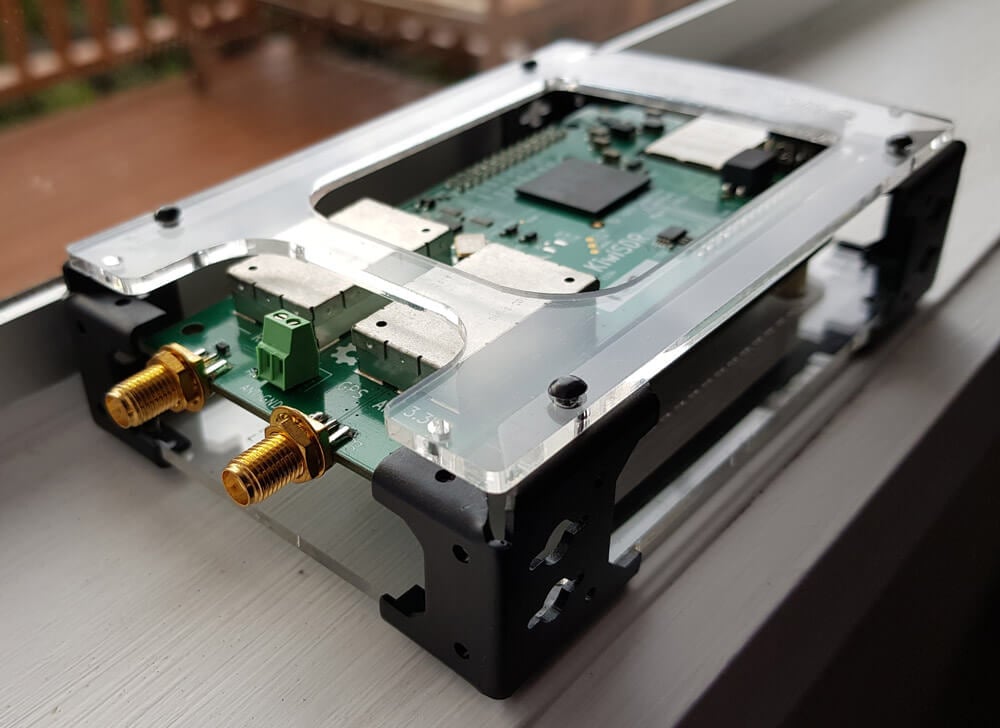

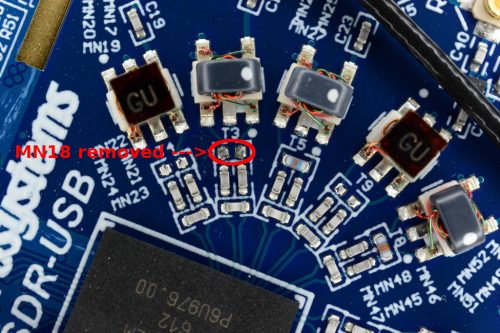

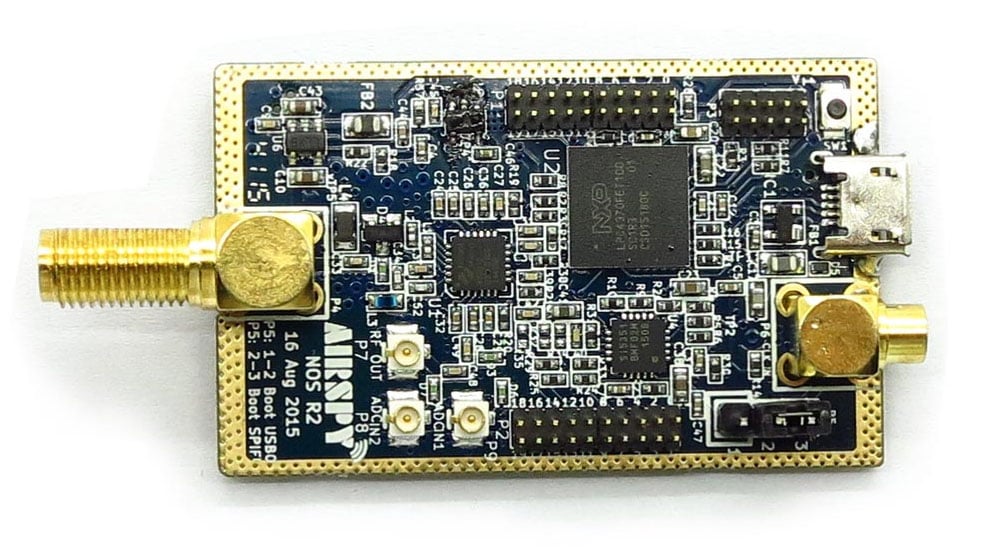

PCB Photos

Compared to the RSP1 the RSP1A PCB is significantly more populated due to the additional filter banks.

Testing the RSP1A

Below we show some screenshots of tests that we made to compare the three RSP units. We focused on bands where the RSP1 or RSP2 had issues, and tried to show how much improvement you can get from the RSP1A.

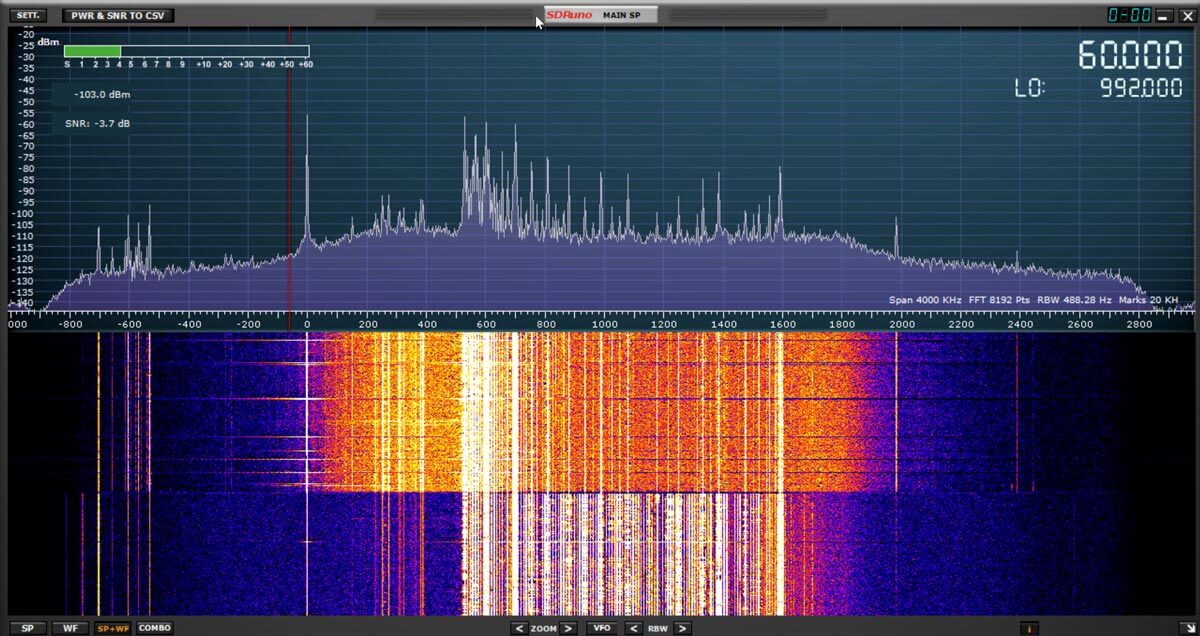

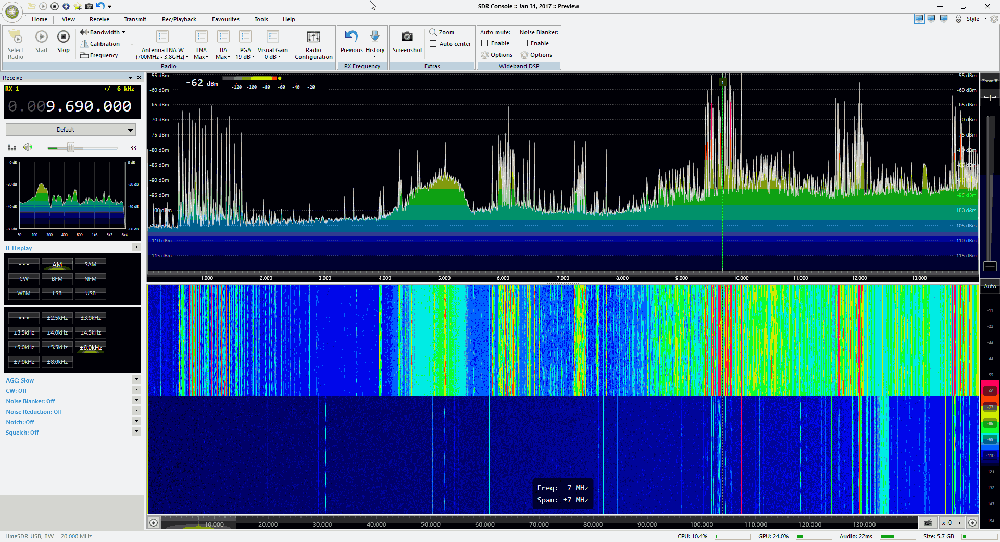

Medium Wave Broadcast AM Band

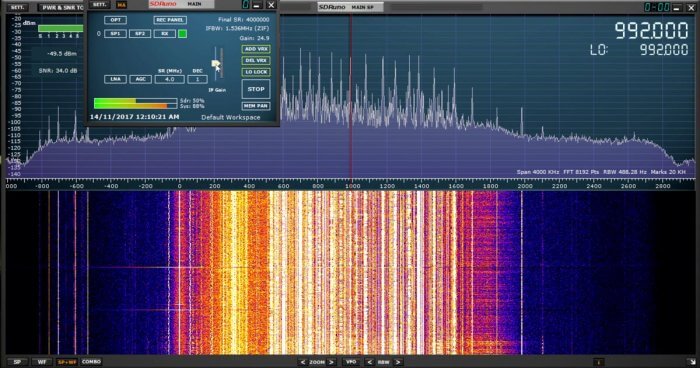

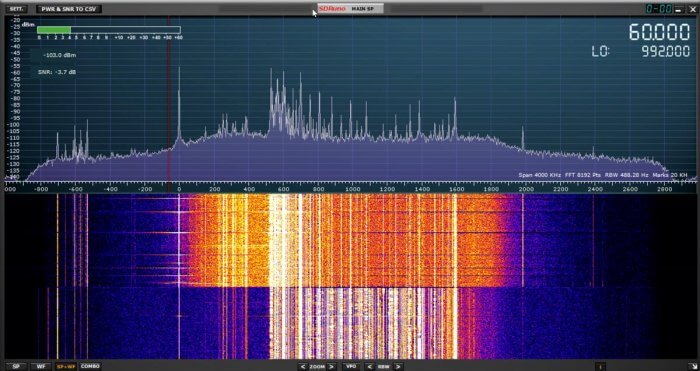

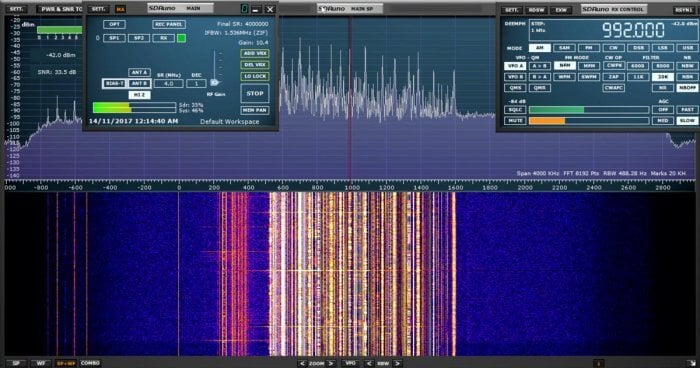

In these screenshots we compare the three SDRs on the broadcast AM band, which has some very strong signals. The RSP1 definitely shows signs of overloading, and turning the gain down did not reduce the interference shown between 0 - 500 kHz.

The RSP1A, on the other hand, does not overload as easily. In the third screenshot we turn the MW notch on halfway through the waterfall. The notch does not cover the entire AM band, and signals at around 500 - 700 kHz are attenuated less. But turning it on does seem to do enough to solve most imaging problems, as will be seen in the next tests.